Computers have been evolving rapidly since their inception over half a century ago. In that time, they've gone from hulking behemoths that filled entire rooms to sleek devices that can fit in our pockets. A lot of that progress is thanks to innovations in processor design.

One such innovation is the microprocessor. A microprocessor integrates an entire central processing unit (CPU) onto a single chip, where earlier processors were built from many separate components. This innovation allowed computers to get smaller, cheaper, and more portable, while still maintaining their processing power.

Another innovation that has helped to make computers more powerful is the development of multicore processors. A multicore processor is a single chip that contains multiple processors, or cores. This allows a single computer to have the processing power of multiple traditional processors.

The development of these two innovations has helped to make computers smaller, more portable, and more powerful than ever before. Thanks to these innovations, we now have devices like laptops, tablets, and smartphones that are incredibly powerful, yet small enough to carry anywhere and, in the case of phones, to fit in our pockets.

What was the first innovation that helped to make computers more powerful?

The first innovation that helped to make computers more powerful was the creation of the microprocessor. The microprocessor is a computer chip that contains all the necessary circuitry to perform arithmetic and logic operations. This single chip greatly reduced the size and cost of computers while increasing their speed and functionality.

How have innovations in computer hardware helped to make computers more powerful?

Innovations in computer hardware have helped to make computers more powerful in several ways. Perhaps the most obvious way is by increasing the processing speed of CPUs. This has led to faster overall performance and shorter wait times for tasks to be completed. Additionally, improvements in memory and storage capacity have allowed more data to be stored on a single device and have made it possible to access that data more quickly.

The introduction of new types of input and output devices has also made computers more powerful. For example, the mouse and the graphical user interface were both innovations that greatly increased the usability of computers. The mouse made it possible to point and click on objects on the screen, which made it much easier to select commands and navigate menus. The graphical user interface made it possible to display information in a more user-friendly way, which made it easier to understand and use.

Finally, the development of networking technologies has made computers more powerful by connecting them to the internet and to each other. This has allowed for a vast amount of information to be shared between computers and has made it possible to access files and applications from any location. Additionally, it has made it possible to work on collaborative projects with people from all over the world.

How have innovations in computer software helped to make computers more powerful?

Computers are one of the most incredible inventions of the 20th century. They've come a long way since they were first created and they continue to evolve at an amazing rate. One of the biggest reasons for their continued success is the way that they've been able to continually improve their software. New innovations in computer software have helped to make computers more powerful in a number of ways.

Perhaps the most important way that computer software has helped to make computers more powerful is by making them more user-friendly. In the early days of computing, only those with the most technical expertise could use computers. However, as software has become more sophisticated, it has become much easier for the average person to use a computer. This has opened up a whole world of possibilities for how computers can be used.

Another way that computer software has made computers more powerful is by making better use of their hardware. Early computers were incredibly slow by today's standards, which limited them to relatively basic tasks. However, as software has become more efficient, it has been able to exploit the ever-increasing processing power of computers. This has allowed computers to be used for much more complex tasks than ever before.

Finally, computer software has also helped to make computers more connected. In the early days of computing, computers were mostly isolated devices. However, as software has become more sophisticated, it has allowed computers to connect to each other and share data. This has made it possible to do things like create a global network of computers, which has greatly increased the power of computing.

In short, innovations in computer software have helped to make computers more powerful in a number of ways. They have made them more user-friendly, increased their processing power, and made them more connected. This has all helped to make computers an essential part of our lives and has helped to change the world in a number of ways.

What is Moore's Law and how has it helped to make computers more powerful?

Moore's Law is an observed phenomenon in the history of computing hardware. It is the observation that the number of transistors on an integrated circuit (IC) doubles approximately every two years. This trend has continued for several generations of ICs, and has resulted in more and more powerful computing devices.
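As a rough illustration of what doubling every two years implies, the short Python sketch below projects a transistor count forward and, going the other way, estimates the doubling period implied by two counts. The function names and the starting figure of one million transistors are made up for the example, and the model is the idealised trend, not measured data.

```python
import math


def projected_transistors(start_count, years, doubling_period=2.0):
    """Idealised Moore's Law: the count doubles once per doubling_period years."""
    return start_count * 2 ** (years / doubling_period)


def implied_doubling_period(start_count, end_count, years):
    """Doubling period (in years) that would turn start_count into end_count."""
    return years * math.log(2) / math.log(end_count / start_count)


# Hypothetical chip with one million transistors, projected ten years ahead.
print(projected_transistors(1_000_000, 10))  # five doublings
print(implied_doubling_period(1_000_000, 32_000_000, 10))
```

Ten years at one doubling every two years is five doublings, so the hypothetical chip grows by a factor of 32.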

The trend was first observed by Gordon Moore, one of the founders of Intel, in a 1965 paper. He predicted that the number of transistors on a chip would double every year for the next ten years. This prediction turned out to be too optimistic, and was later revised to doubling every two years.

Despite dating back to 1965, Moore's Law has remained a key driver of the computer industry. The ever-increasing number of transistors on a chip enables more functionality to be added to devices, and also allows for existing functionality to be performed faster and more efficiently.

One of the most dramatic examples of Moore's Law in action is the smartphone. In 2007, the first iPhone was released with an IC that contained around 200 million transistors. Ten years later, the iPhone X shipped with the A11 Bionic chip, which contains around 4.3 billion transistors. This increase in transistor count has allowed for the addition of many features that were not possible in the first iPhone, such as a high-resolution screen, a much faster processor, and a sophisticated camera.

As well as making devices more powerful, Moore's Law has also helped to make them smaller and more energy-efficient. This is because each new generation of ICs is able to pack more transistors into the same area, thanks to advances in manufacturing technology. This miniaturization has been a key driver of the digital revolution, as ever-smaller devices are able to offer ever-greater computing power.

It is often said that Moore's Law is about to come to an end, as the physical limitations of transistor scaling mean that it is no longer possible to double the number of transistors on a chip every two years. However, there are still plenty of improvements that can be made in terms of transistor design and manufacturing, so it is likely that Moore's Law will continue to hold true for many years to come.

What are the different types of computer architectures and how do they help to make computers more powerful?

Computers are amazing devices that have revolutionized the way we live and work. They are capable of performing incredibly complex tasks, and they continue to get more powerful every year. But how do they work? How do computers get so powerful?

The answer lies in their architecture. Computer architects design the fundamental structure of computers, and they play a vital role in making them more powerful. There are different types of computer architectures, and each has its own advantages and disadvantages.

The most common type of computer architecture is the Von Neumann architecture. It was first described by mathematician and physicist John von Neumann in 1945, and it is still the basis of most modern computers. In a Von Neumann machine, program instructions and data are stored in the same memory and travel over the same path to the processor. The main advantage of this design is that it is relatively simple, which makes it easy to design and build computers that are based on it. However, it also has a disadvantage: because instructions and data share one path, the processor cannot fetch an instruction and access data at the same moment. This limit is often called the Von Neumann bottleneck, and it becomes more noticeable in parallel processing, where several processing units compete for the same memory.
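One way to picture the Von Neumann design is as a single memory that holds both the program and its data, with the processor fetching from it one item at a time. The toy machine below is a minimal sketch of that idea in Python. The four-instruction set and the `run` function are invented for illustration and do not correspond to any real processor.

```python
def run(memory):
    """Execute a toy program stored in the same list as its data.

    Returns the number of memory accesses, counting instruction fetches
    and data reads/writes alike, since they share one memory.
    """
    pc = 0        # program counter: address of the next instruction
    acc = 0       # single accumulator register
    accesses = 0
    while True:
        op, addr = memory[pc]  # instruction fetch
        accesses += 1
        pc += 1
        if op == "HALT":
            return accesses
        if op == "LOAD":
            acc = memory[addr]
        elif op == "ADD":
            acc += memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        else:
            raise ValueError(f"unknown instruction: {op}")
        accesses += 1          # the data read or write


# Addresses 0-3 hold instructions, addresses 4-6 hold data.
memory = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT", None),
    2,  # address 4
    3,  # address 5
    0,  # address 6: the result is stored here
]
accesses = run(memory)
print(memory[6], accesses)
```

Every step, whether it fetches an instruction or touches data, goes through the same memory, so both kinds of access add to the same count.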

The Harvard architecture is another type of computer architecture. It is named after the Harvard Mark I computer, which kept its instructions and its data in separate storage. Because a Harvard machine has separate memories and paths for instructions and data, it can fetch the next instruction while reading or writing data, which makes it more efficient than the Von Neumann architecture for many workloads. However, it is more complex, which makes it more difficult to design and build computers that are based on this architecture.

The most recent type of computer architecture is the neural architecture, often called neuromorphic. Neural architectures are modeled on the brain, and they are designed to be very efficient. They are also well suited for parallel processing. However, they are very complex, and they are not yet widely used.

All of these different types of computer architectures have their own advantages and disadvantages. The type of architecture that is used in a particular computer depends on the application for which the computer will be used. For example, a computer that is designed for scientific calculations will likely use a different architecture than a computer that is designed for playing video games.

Computer architectures continue to evolve, and new architectures are being proposed all the time. As computer architects continue to experiment and innovate, we can expect computers to become even more powerful in the future.

How have parallel computing and distributed computing helped to make computers more powerful?

Parallel computing and distributed computing are two important methods for making computers more powerful.

Parallel computing is a type of computing where several processors work on the same task at the same time. In the best case, this speeds up the task by a factor equal to the number of processors used: with four processors, the task can finish up to four times faster. In practice the gain is usually smaller, because some part of most tasks cannot be split up and the processors must spend time coordinating.

Distributed computing is a type of computing where tasks are divided among several computers in a network. The same best-case scaling applies, so four computers can finish a task up to four times faster, but communication over the network adds further overhead.
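The factor-of-N figure is a best case. A common way to estimate a more realistic speedup is Amdahl's law, which accounts for the part of a task that cannot be parallelised. The sketch below is illustrative only: the function name and the 90% figure are assumptions chosen for the example.

```python
def amdahl_speedup(parallel_fraction, workers):
    """Estimated speedup when only parallel_fraction of the work can be split."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# A fully parallel task scales with the worker count; a 90% parallel one does not.
for workers in (1, 4, 16):
    print(workers, amdahl_speedup(1.0, workers), round(amdahl_speedup(0.9, workers), 2))
```

With these assumptions, a task that is entirely parallel gets the full four-times speedup on four workers, while any serial portion pulls the result below the worker count.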

What is hardware acceleration and how has it helped to make computers more powerful?

Computers are becoming more powerful all the time, and hardware acceleration is one of the main reasons. Hardware acceleration is the use of specialised hardware to perform certain tasks much faster than a general-purpose processor can. This is usually done with a purpose-built chip, such as a graphics processing unit (GPU), or a dedicated circuit board. It can also be done by using a field-programmable gate array (FPGA).

The main benefit of hardware acceleration is that it can significantly improve the performance of certain tasks. This can make a big difference for applications that are time-sensitive, such as real-time video processing or data analysis. Another benefit is that hardware acceleration can free up the main processor to do other tasks. This can improve the overall efficiency of a system.

One of the key ways in which hardware acceleration has helped to make computers more powerful is by assisting with the development of new algorithms. Algorithms are a key part of many computer applications. They are a set of instructions that tell a computer how to do something. The development of new and more efficient algorithms is vital for the continued improvement of computer performance.

Hardware acceleration can be used to significantly speed up the development of new algorithms. This is because hardware acceleration can be used to test algorithms very quickly. This means that more algorithms can be tested in a shorter period of time. As a result, more efficient algorithms can be developed in a shorter time frame.

Another way in which hardware acceleration has helped to make computers more powerful is by enabling the development of new and more complex applications. Complex applications require more processing power than simple applications. Hardware acceleration can be used to provide the extra processing power required to run them.

One example of a complex application that has been developed with the help of hardware acceleration is video editing software. Video editing software is a complex application that requires a significant amount of processing power. Hardware acceleration has been used to provide the extra processing power required to run video editing software. As a result, video editing software has become more powerful and sophisticated.

Overall, hardware acceleration has helped to make computers more powerful by assisting with the development of new algorithms, by enabling the development of new and more complex applications, and by providing the extra processing power required to run these applications.

What are the different types of computer memory and how do they help to make computers more powerful?

Computer memory is a vital component that allows computers to function and store the data that is necessary for them to operate. There are different types of computer memory, each with their own unique purpose that helps to make computers more powerful. The different types of computer memory include Random Access Memory (RAM), Read Only Memory (ROM), and Flash Memory.

Random Access Memory (RAM) is the most common type of computer memory. It is used to store the programs and data that are currently being used by the computer. When the computer is turned off, the data in RAM is lost. RAM is measured in megabytes (MB) or gigabytes (GB). The more RAM a computer has, the more programs and data it can keep ready at once, which reduces how often it has to fall back on much slower storage.

Read Only Memory (ROM) is a type of computer memory whose contents cannot be changed during normal operation and are kept when the power is off. It is used to store firmware, such as the code that starts the computer before the operating system loads. ROM chips are small, typically measured in kilobytes (KB) or megabytes (MB), and adding more ROM does not make a computer faster.

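Flash Memory is a type of computer memory that can be erased and rewritten, and that keeps its contents when the power is off. It is used for long-term storage in solid-state drives (SSDs), USB drives, memory cards, and smartphones. Flash memory is measured in gigabytes (GB) or terabytes (TB). A computer with more flash memory can store more data, and flash-based drives can read and write that data much faster than mechanical hard disks.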

What are the different types of computer processors and how do they help to make computers more powerful?

Computer processors are the central processing units (CPUs) of computers and are responsible for carrying out the instructions of computer programs. Modern processors are transistor-based and are very powerful, capable of carrying out billions of instructions per second. They have a number of different design features that help to make them more powerful, including multiple cores, pipelining, and out-of-order execution.

Multiple cores are a feature of most modern processors: several independent processing units are placed on a single chip. This enables the processor to run several streams of instructions at the same time, increasing its overall speed and efficiency.
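To make the idea of several cores sharing a job concrete, the sketch below splits a list into chunks, sums each chunk in a separate worker, and combines the partial results. `parallel_sum` and `chunked` are hypothetical helper names. Note that in standard CPython builds, threads do not execute Python code on several cores at once, so this shows the split-and-combine pattern rather than a guaranteed speedup; `ProcessPoolExecutor` is the usual choice for CPU-bound work.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def chunked(values, n_chunks):
    """Split values into at most n_chunks pieces of nearly equal size."""
    size = -(-len(values) // n_chunks)  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def parallel_sum(values, workers=None):
    """Sum values by giving each worker one chunk, then adding the partial sums."""
    workers = workers or os.cpu_count() or 1
    if not values:
        return 0
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = pool.map(sum, chunked(values, workers))
        return sum(partial_sums)


print(parallel_sum(list(range(1000)), workers=4))
```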

Pipelining is another feature of processors that helps to improve their performance. It involves breaking the handling of each instruction into stages, such as fetch, decode, and execute, and overlapping them: while one instruction is being executed, the next is being decoded and a third is being fetched. This enables the processor to complete more instructions in a given period of time, again increasing its overall speed and efficiency.
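A back-of-the-envelope cycle count shows why pipelining helps. The sketch assumes an idealised pipeline in which every stage takes one clock cycle and nothing ever stalls; the function names are made up for the example.

```python
def unpipelined_cycles(instructions, stages):
    """Each instruction passes through every stage before the next one starts."""
    return instructions * stages


def pipelined_cycles(instructions, stages):
    """The first instruction fills the pipeline; after that, one finishes per cycle."""
    if instructions == 0:
        return 0
    return stages + (instructions - 1)


n, k = 100, 5
print(unpipelined_cycles(n, k), pipelined_cycles(n, k))
```

For 100 instructions on a 5-stage pipeline, this gives 500 cycles without pipelining and 104 with it. Real pipelines fall short of this ideal because of stalls and branches.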

Out-of-order execution is a feature of processors that allows them to execute instructions in an order that is different from the order in which they were received. This can help to improve performance by making better use of the processor's resources.
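The toy model below compares in-order and out-of-order execution on a short instruction sequence. It is a simplified sketch, not how any real processor is built: it assumes one instruction can start per cycle, each register is written only once and before anything reads it, and an instruction can start as soon as its inputs are available. The `Instr` class, the function names, and the latencies are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    name: str
    dest: str        # register this instruction writes
    sources: tuple   # registers it reads
    latency: int     # cycles until its result is available


def in_order_cycles(program):
    """Instructions start strictly in program order, waiting for their inputs."""
    ready_at = {}    # register -> cycle at which its value is available
    next_issue = 0
    finish = 0
    for ins in program:
        start = max([next_issue] + [ready_at.get(s, 0) for s in ins.sources])
        ready_at[ins.dest] = start + ins.latency
        finish = max(finish, start + ins.latency)
        next_issue = start + 1
    return finish


def out_of_order_cycles(program):
    """Each cycle, start the oldest waiting instruction whose inputs are ready."""
    produced = {ins.dest for ins in program}  # registers written by the program
    ready_at = {}
    pending = list(program)
    cycle = 0
    finish = 0

    def is_ready(reg):
        # Registers the program never writes are assumed available from cycle 0.
        return reg not in produced or ready_at.get(reg, float("inf")) <= cycle

    while pending:
        for ins in pending:
            if all(is_ready(s) for s in ins.sources):
                ready_at[ins.dest] = cycle + ins.latency
                finish = max(finish, cycle + ins.latency)
                pending.remove(ins)
                break
        cycle += 1
    return finish


program = [
    Instr("load a", "r1", (), 3),
    Instr("add a", "r2", ("r1",), 1),
    Instr("load b", "r3", (), 3),
    Instr("add b", "r4", ("r3",), 1),
]
print(in_order_cycles(program), out_of_order_cycles(program))
```

In this example the in-order version takes 8 cycles and the out-of-order version takes 5, because the second load starts while the first add is still waiting for its input.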

All of these features help to make processors more powerful and allow computers to carry out more complex tasks.

Frequently Asked Questions

What are the advantages of biocomputers?

Some key advantages of biocomputers are their energy efficiency, speed, and cost. They are also more likely to be commercialized soon than quantum computers are.

What are the components of computer hardware?

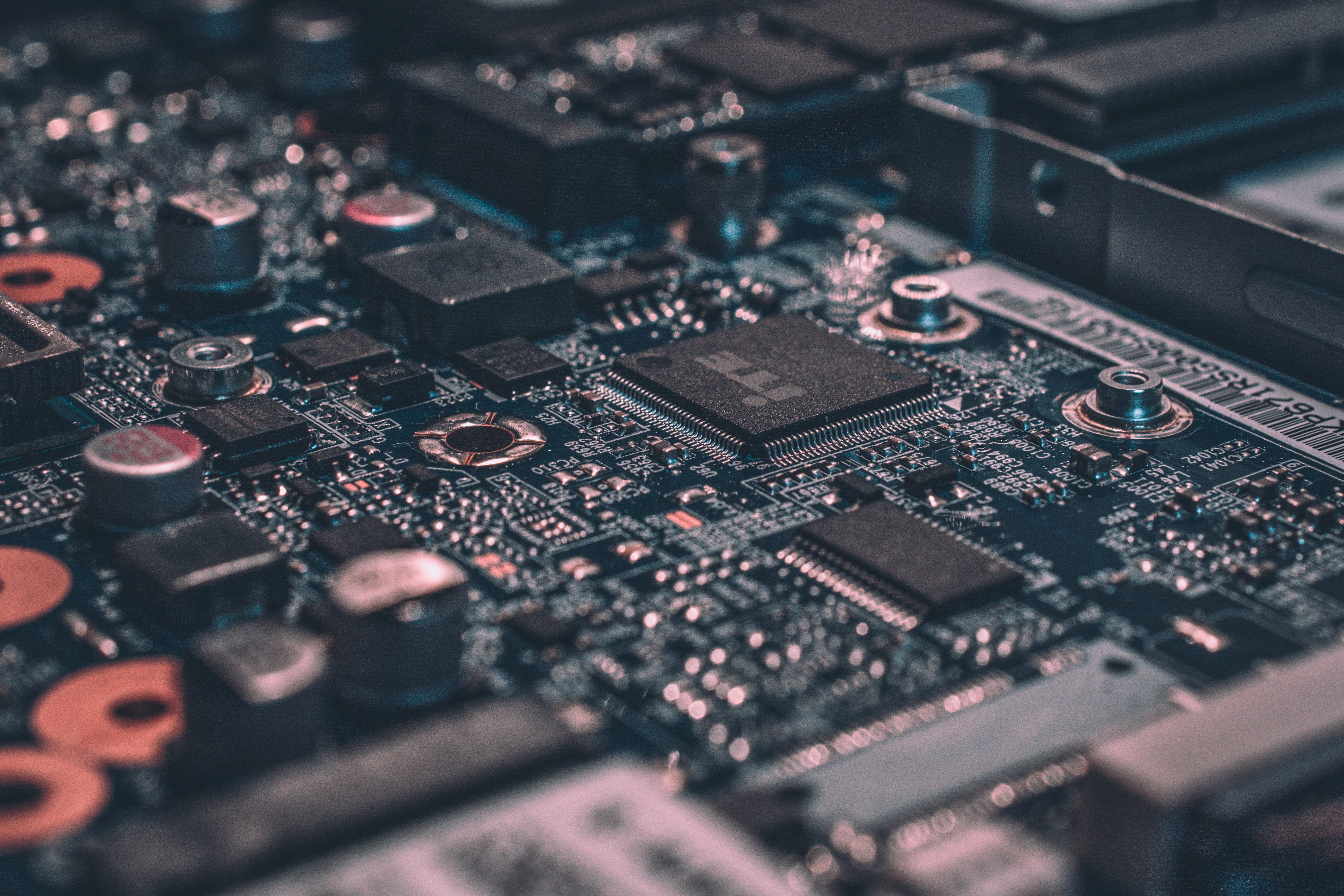

A computer's hardware includes the physical parts of a machine, such as the motherboard, power supply, keyboard, mouse and monitor.

What are the factors that affect computer performance?

1. The speed of the central processing unit (CPU) is one of the most important factors that affect computer performance. Older computers often have slower CPUs, while more recent models typically have faster CPUs.
2. The memory capacity of a computer is also important. A computer with more memory can hold more data and programs, speeding up the overall performance of the computer.
3. Input/output devices also affect computer performance. If a computer has a slow disk drive, for example, it will take longer for the computer to find and open files.
4. RAM size is another important factor that affects performance. More RAM means faster access to data and files on a computer.
5. Finally, all else being equal, a newer generation of CPU typically performs better than an older one.

What is the most important part of a computer?

The most important part of a computer is the CPU.

Why do laptops have different cases for different components?

Since laptops are portable, they have to be lightweight while still protecting the components. Laptop cases are designed specifically for each component in order to protect them from shock and other damage.
